Kate, the Princess of Wales, released a video last week in which she shared her cancer diagnosis, which followed major abdominal surgery in January. The statement was meant not just to inform the public but also to put to rest rumors and speculation that had grown ever more convoluted and obsessive since Britain’s future queen disappeared from public view following a formal appearance on Christmas Day.

However, only minutes after the video’s release, social media swarmed with claims that the video was a fake generated using AI.

Some of that suspicion was understandable, considering that a recent photograph released by the royal family had been manipulated using photo-editing tools. Kate admitted as much earlier this month. But claims that the video was fake continued even after BBC Studios confirmed its authenticity in the best way possible: not through some complicated analysis of the imagery, but by making it clear that they filmed the video.

For years, when people warned against the threat of AI tools generating audio, images, and video, the biggest concern was that these tools might be used to generate convincing fakes of public figures in compromising positions. But the flip side of that possibility may be an even bigger issue. Just the existence of these tools is threatening to deplatform reality.

In another recent flap, “deepfake” tools were used to create pornographic images of music icon Taylor Swift. These images, which appear to have originated with an informal contest in the toxic cesspool of the 4chan message board, spread so quickly across the poorly moderated social media site X (formerly Twitter) that it was forced to shut down searches involving Swift’s name while it looked for a way to deal with them. The images were seen millions of times before X’s intentionally weakened defenses managed to clear most of them from the platform.

But what’s happening with Kate bears an even more striking resemblance to another case of real video being called out as fake, and this one is an even bigger signal of what’s ahead as we roll toward Election Day.

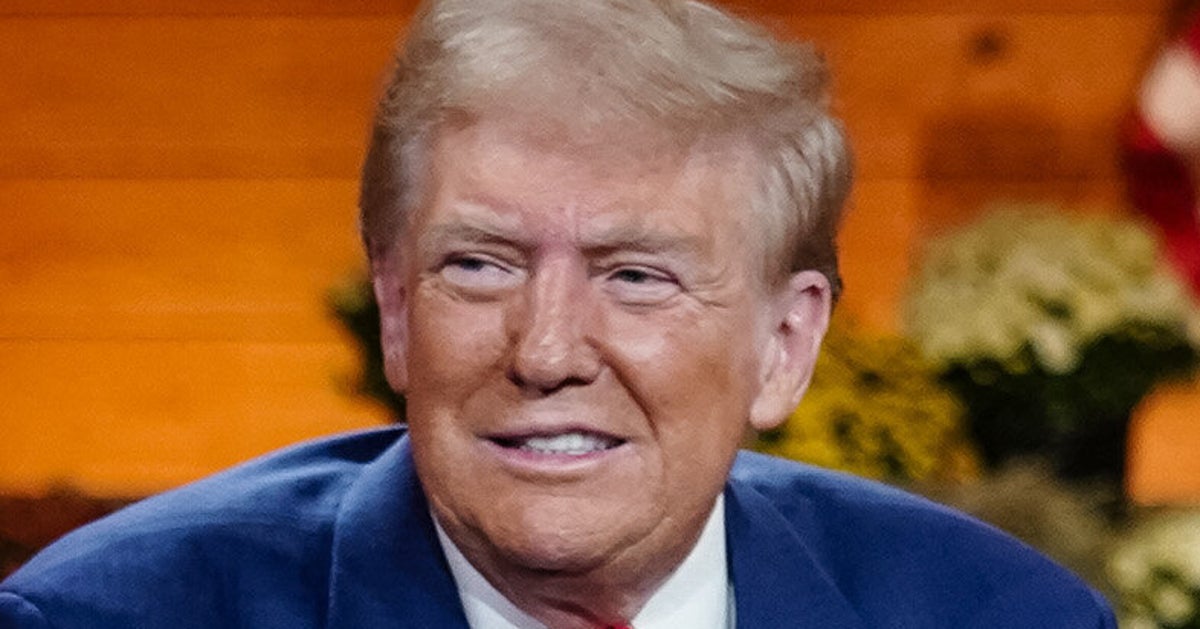

On March 12, Donald Trump posted on Truth Social, attacking Biden and a series of videos.

The Hur Report was revealed today! A disaster for Biden, a two tiered standard of justice. Artificial Intelligence was used by them against me in their videos of me. Can’t do that Joe!

Trump appears to be referring to a series of 32 videos that were shown during the House hearing in which former special counsel Robert Hur testified. These videos, shown by House Democrats, contained instances in which Trump didn’t recall the names of foreign leaders, mispronounced simple words (including “United States”), referenced Barack Obama when he meant Joe Biden, and delivered a plethora of nonsensical asides. That last category included a claim that windmills were killing whales.

All the videos were real clips taken from Trump’s public appearances. But his dismissal of them as being the product of AI shows just how simple it is to plant doubt about any event, no matter how public or well-documented.

For the past year, even as AI image generation has steadily improved and AI videos have moved beyond laughable curiosities, there has been a false confidence that the veracity of these images could always be discerned. Many people continue to believe that a close look at the eyes, limbs, or fingers in generated images will locate some telltale flaws. Or that, even for the rare AI image that can fool the naked eye, some software tool would easily unravel the deception.

Wall Street Journal commentators are quick to point out issues with these sample videos created using OpenAI’s Sora tool. What they’re not mentioning is that this is very, very early work by this system.

The era in which AI-generated imagery can be readily spotted is already fading. As the companies and personalities behind these tools are fond of saying, these systems will never be worse than they are now. From here, they’ll only get better. The images they create will only get more realistic and more difficult to separate from those generated using a camera aimed at the physical world.

The line between what’s real and fake is becoming very blurry, very quickly. However, even if it never fades completely, that may not matter. Much less sophisticated tools from five years ago could not only fool people but also erode trust when it came to online imagery. Most people are simply not going to scrutinize each image for flaws. Or dismiss claims that a real image is AI-generated.

Disinformation on social media isn’t just driving up hate speech and racism online; it’s also a core part of a declining belief in journalism. According to a Pew Research Center survey from 2022, adults under 30 had nearly as much trust in what they read on social media sites as they did in information from traditional news outlets. Across all age groups, there was a steep decline of trust in national news organizations during a period of only six years.

Attention-hungry trolls and Russian bot farms turned social media sites into a stew of conspiracy theories and disinformation. Now it seems impossible to go a day without running into instances of AI tools being used to create false narratives. That can be AI audio reportedly used to smear a high school principal with faked recordings of racist and antisemitic remarks. A TikTok video purporting to capture the conversation between an emergency dispatcher and a survivor of the Francis Scott Key Bridge collapse went viral, despite being fake. Or, and this is sadly real, video ads promoting erectile dysfunction drugs and Russian dictator Vladimir Putin using stolen images of online influencers.

That pro-Putin video is unlikely to be a coincidence. Just as with other disinformation, Russia has been at the forefront of using generative AI tools as part of its expanding disinformation campaigns. Even many of the rumors connected to Kate go back to a Kremlin-linked group in a scheme that “appeared calculated to inflame divisions, deepen a sense of chaos in society, and erode trust in institutions—in this case, the British royal family and the news media,” according to The New York Times.

The time when AI tools can be used to confidently generate a video of Joe Biden taking a bribe, election workers tossing Trump ballots in the trash, or Trump hitting a decent golf shot may be months away … but it’s no more than months. The mechanisms for identifying and removing such convincing disinformation from social media are not only weak, they’re largely nonexistent.

But even before the flood of fakes arrives, we’re having to deal with what may be the more debilitating effect of this improving suite of tools: a profound doubt about the statements, images, and videos that are not fake. We’re entering a world where there is no agreed-on authority, not even the evidence of your own eyes.

In the war on reality, reality is badly outnumbered.

